RENMIN UNIVERSITY of CHINA

A research team from the Gaoling School of Artificial Intelligence at Renmin University of China (RUC), in collaboration with Ant Group, has officially unveiled the LLaDA-MoE series of models. The new models integrate masked diffusion mechanisms with dynamic sparse activation strategies, marking a significant milestone in the shift of diffusion-based language models from dense computation to sparse, efficient architectures.

As a core driving force of artificial intelligence, large language models (LLMs) are profoundly reshaping applications in natural language understanding, intelligent decision-making systems and everyday human-computer interaction. However, traditional autoregressive models, which generate text sequentially token by token, face inherent limitations: slow decoding, difficulty modeling bidirectional dependencies and inefficiency with long sequences.

The research team, led by Wen Jirong and Li Chongxuan, professors at RUC’s Gaoling School of Artificial Intelligence, made a major breakthrough by challenging the long-held assumption that "large language models must be autoregressive". Based on theoretical insights and scaling law exploration, the team proposed that the essence of linguistic intelligence is not bound to autoregressive structures but lies in accurately modeling natural language distributions.

Building on this philosophy, the team and Ant Group jointly released LLaDA 8B, the world’s first dialogue-capable diffusion large language model, in February. Since its release, the LLaDA series has attracted widespread attention across academia, industry and the open-source community, recording more than 400,000 downloads in a single month. Research institutions and companies such as NVIDIA, Apple, UCLA and ByteDance have cited LLaDA in follow-up work or used it as a benchmark model.

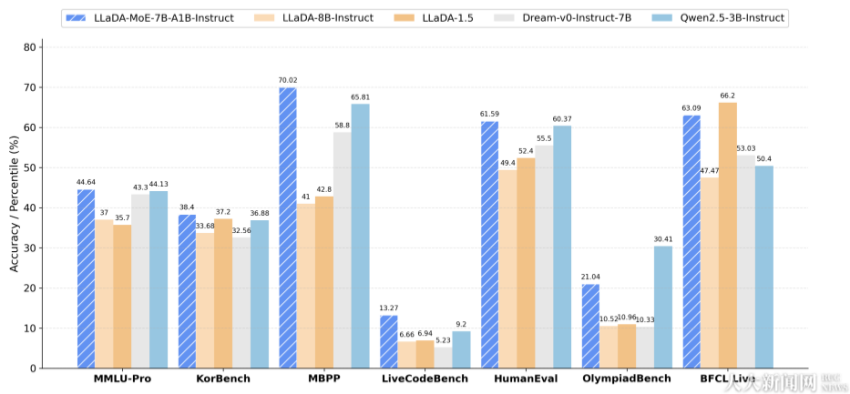

The LLaDA-MoE instruction-tuned model is compared with other diffusion and autoregressive language models across key metrics on various tasks. [Photo/ruc.edu.cn]

Over the following months, RUC and Ant Group continued their collaboration, introducing successive models. The joint team has now taken a further step by extending the diffusion language model architecture from dense to Mixture-of-Experts (MoE), releasing LLaDA-MoE, the first native diffusion language model with an MoE architecture. This marks a dual breakthrough in efficiency and intelligence, ushering in a new era of sparse, parallel and high-performance language modeling.

By deeply integrating diffusion language modeling with sparse MoE structures, LLaDA-MoE overcomes long-standing bottlenecks in expressiveness and scalability for non-autoregressive models. By combining mask-based denoising with expert routing, which activates only a subset of experts for each token, it delivers high-quality text generation at low computational cost. This innovation establishes a new paradigm of "efficient intelligence" for diffusion-based architectures, paving the way for future scaling and exploration of AI’s upper limits.
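
For readers unfamiliar with expert routing, the idea can be illustrated with a minimal Python sketch. It is not the LLaDA-MoE implementation: the function names, the four toy "experts" and the router scores are all hypothetical, and the sketch covers only the routing half of the design, not the mask-based denoising. It assumes a common top-k scheme in which a router scores every expert for a token, only the k best are run, and their outputs are blended by softmax weights.

```python
import math

def top_k_route(gate_logits, k):
    """Pick the k highest-scoring experts and softmax-normalize their weights."""
    # Indices of the k largest gate logits (ties broken by lower index).
    chosen = sorted(range(len(gate_logits)), key=lambda i: (-gate_logits[i], i))[:k]
    # Softmax over the chosen logits only; subtract the max for numerical stability.
    peak = max(gate_logits[i] for i in chosen)
    exps = {i: math.exp(gate_logits[i] - peak) for i in chosen}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}

def moe_layer(x, gate_logits, experts, k=2):
    """Weighted sum of the outputs of the k routed experts; the rest are skipped."""
    weights = top_k_route(gate_logits, k)
    return sum(w * experts[i](x) for i, w in weights.items())

# Four hypothetical scalar "experts"; a real expert is a feed-forward network.
experts = [lambda x: x + 1.0, lambda x: 2.0 * x, lambda x: -x, lambda x: x * x]
gate_logits = [0.1, 2.0, -1.0, 2.0]  # made-up router scores for one token

weights = top_k_route(gate_logits, k=2)
output = moe_layer(3.0, gate_logits, experts, k=2)
```

In this toy case only two of the four experts are evaluated for the token, which is the source of the compute savings that sparse activation is meant to provide.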

Links for the models:

https://huggingface.co/inclusionAI/LLaDA-MoE-7B-A1B-Base

https://huggingface.co/inclusionAI/LLaDA-MoE-7B-A1B-Instruct